Member-only story

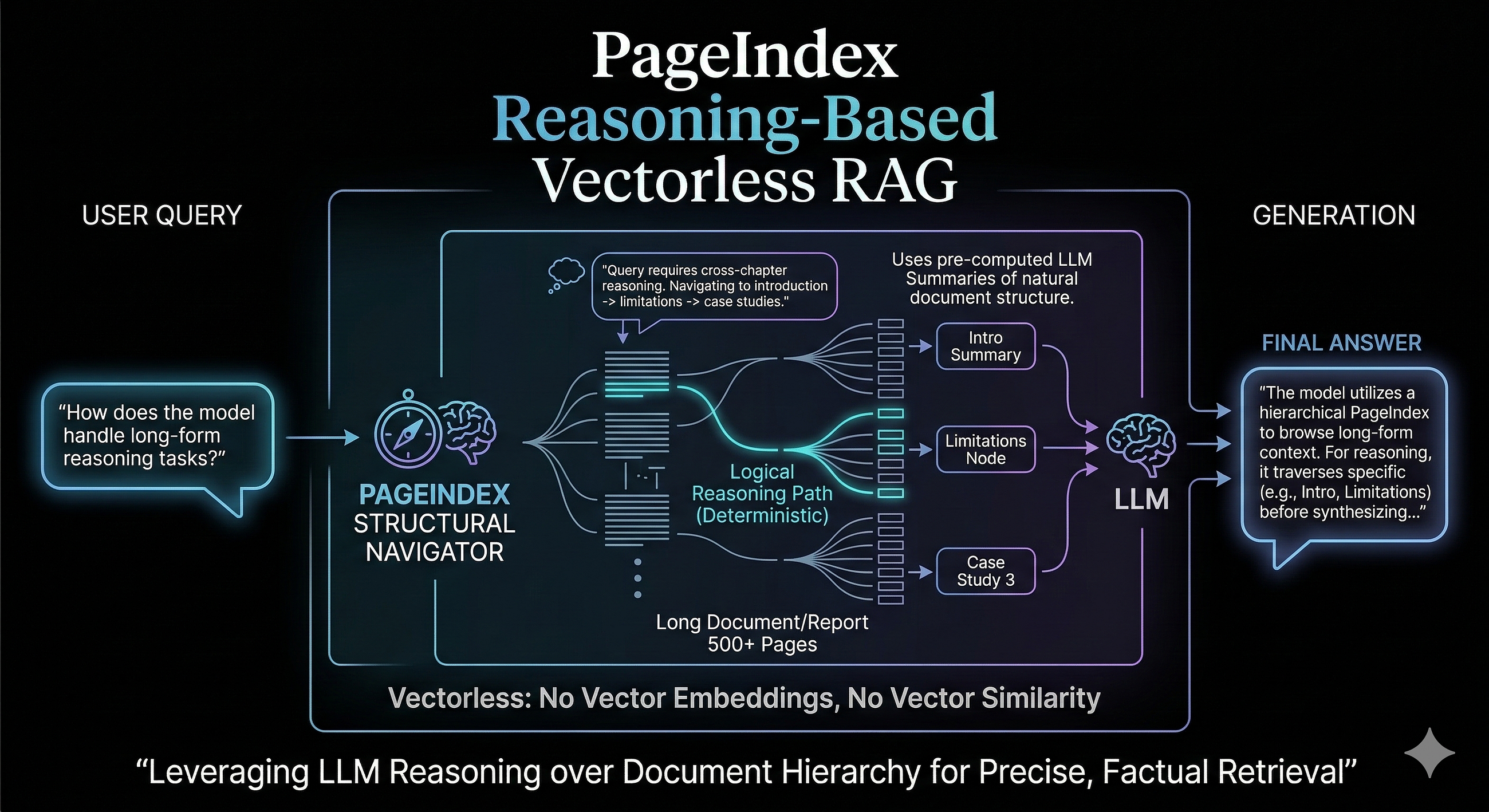

PageIndex Reasoning Based Vectorless RAG

A new architectural pattern in building RAG applications

Jessica Saini5 min read·2 days ago

Jessica Saini5 min read·2 days ago--

PageIndex Vectorless RAG is a retrieval augmented generation approach that retrieves relevant content without using vector embeddings or a vector database at all.

Vector embeddings are a key component in driving costs when building a scalable RAG based system. One of the key issues with the traditional RAG is retrieval quality. For a given user query, a traditional RAG may fail to give the right answer using semantic similarity search. The traditional RAG works in the following way:

Query → Embed → Vector Search → Retrieve → Generate

- Query: User asks a question.

- Embed: An embedding model turns text into a vector (e.g., $[0.12, -0.05, 0.88…]$).

- Vector Search: The system looks for the closest neighbors in a high-dimensional space.

- Retrieve: Top-k most similar document chunks are pulled.

- Generate: The LLM uses those chunks to write a response.

The problem is that semantic similarity does not always means relevance. This is more prevalent for long, structured documents. The right answer is often buried in the structured document and semantic similarity may miss this structure.