
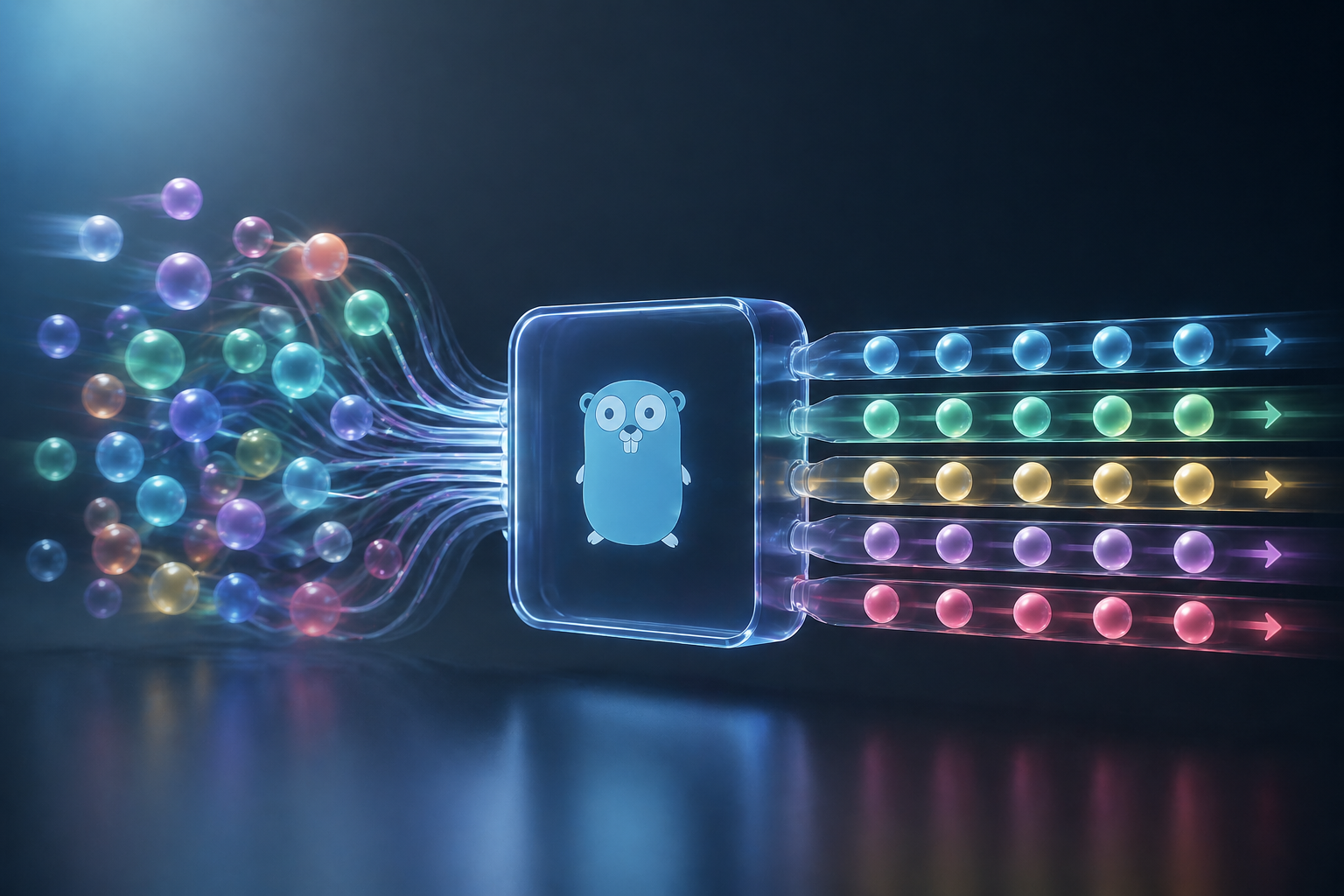

You Think Concurrency Means Parallel Execution. In Go, That’s Only Half True.

The goroutine scheduler doesn’t work the way most engineers visualize it. Understanding the difference changes how you debug production failures.

syarif · 8 min read · Just now


The dashboard showed 340 active goroutines. CPU sat at around 23%. No goroutines blocked on locks, nothing stuck in I/O — at least nothing the profiler was calling out clearly. The system was technically running. Goroutines were being created, tasks were being picked up, logs were scrolling.

And yet our job queue was falling behind by roughly 4 minutes per hour. Consistently. Even after we scaled up.

The first three hypotheses were wrong. The fourth one — once we actually looked at scheduler traces instead of goroutine counts — explained everything. The system was concurrent. It just wasn’t executing the way we had drawn it on the whiteboard.

The Model That Gets You Into Trouble

When most engineers think about goroutines, they picture something like threads — lightweight, sure, but fundamentally parallel. Spin up 50 goroutines, and 50 things happen at once. That’s the mental image. Goroutines as tiny workers all running simultaneously.