Moving from Identity Verification to Structural Intent Locks in the Age of AI

A structural disconnect emerges when authenticated identity is treated as proof of legitimate intent. The gap between human understanding and machine execution defines a new class of security risk in the AI era.

Introduction — The $25.6M Anomaly

In early 2024, a multinational firm in Hong Kong lost $25.6 million after an employee attended a video conference with what appeared to be legitimate company executives — including the CFO.

Every participant looked real.

Voices matched.

Behavior appeared natural.

The employee verified identities visually and audibly, then executed the transfer through authorized internal procedures.

No passwords were stolen.

No encryption was broken.

No system intrusion occurred.

The system worked exactly as designed.

And that is precisely the problem.

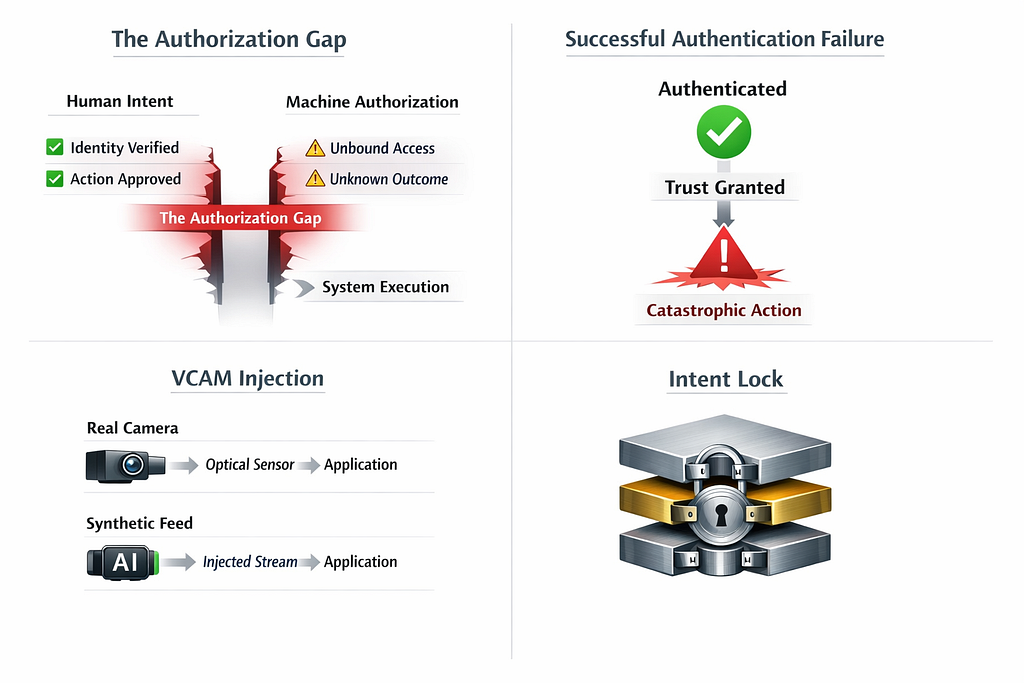

Successful authentication no longer guarantees legitimate action. The diagram illustrates four emerging architectural risks: the Authorization Gap between human intent and system execution, failure caused by over-trusted authentication, Virtual Camera (VCAM) injection replacing physical presence with synthetic input, and the proposed Intent Lock model that binds user meaning to authorized execution.

1. The Illusion of Verified Presence

Modern digital security increasingly relies on visual confirmation as a proxy for trust.

Online onboarding, remote authorization, and eKYC workflows share a common assumption:

If a person appears live on camera, physical presence is confirmed.

Forensic investigations are now dismantling this premise.

Recent audits reveal growing evidence of Virtual Camera (VCAM) Injection — a technique that does not hack systems but replaces reality itself.

Instead of capturing photons from a physical lens, attackers inject a synthetic or prerecorded video stream directly into the application’s input pipeline.

From the system’s perspective:

- Camera permissions are valid

- Video feed exists

- Motion is detected

- Identity appears confirmed

Yet the camera is no longer a sensor.

It has become a programmable interface.

The implication is stark:

“Live video” is no longer proof of presence.

Identity verification becomes performance rather than assurance.

A Proposal to Apple Part 3 — Analysis Traces of “Structural Destruction” in iOS : How a Stolen…

2. The Authorization Gap — Identity ≠ Intent

Authentication confirms identity, but does not constrain execution. The Authorization Gap describes the structural separation between human intent and system action that emerges after successful authentication.

Modern security architecture rests on a flawed logical assumption:

If authentication succeeds, the action must be legitimate.

We have perfected answering:

Who are you?

But neglected verifying:

What are you actually trying to do?

I refer to this structural disconnect as the Authorization Gap.

Once authentication succeeds, most systems grant what can be described as open agency:

Verify_User()

Execute_Action()

There is no structural binding between:

- what the human believes they approve,

- and what the system actually executes.

The authenticated user becomes an unwitting authorization proxy.

In the Hong Kong deepfake case, identity validation succeeded perfectly.

Only intent was compromised.

The Authorization Gap: Why Identity Verification Alone is a Flawed Security Architecture

3. When Authentication Becomes a Liability

Paradoxically, stronger authentication can amplify risk.

Biometrics, passkeys, and device trust signals create high confidence that the correct user is present.

But confidence in identity silently substitutes for verification of meaning.

Attackers no longer need to defeat cryptography.

They only need to manipulate context.

Deepfake executives, persuasive AI agents, and adaptive social engineering exploit this gap by aligning authentication success with malicious intent.

Security fails not because defenses are weak — but because trust is granted too broadly after verification.

4. A Concrete Architectural Example: The iOS Trade-off

This structural issue appears even in highly secure consumer platforms.

Consider Apple’s LocalAuthentication framework.

Many applications rely on:

LAPolicy.deviceOwnerAuthentication

This policy allows biometric authentication but automatically falls back to the device passcode.

From a usability perspective, this ensures availability.

From an architectural perspective, it introduces equivalence:

- Secure Enclave biometric verification

- Knowledge of a 6-digit passcode

Both produce the same authorization outcome.

Applications often cannot distinguish which authentication source succeeded.

This is not a vulnerability.

It is a design trade-off.

Yet attackers increasingly exploit this equivalence to bridge device access into financial authorization.

The system verifies identity correctly — while losing assurance about intent.

5. The AI Multiplier — From Obsession to Routine

AI does not create new structural vulnerabilities; it removes the effort barrier that once limited their exploitation. What required weeks of expert obsession can now be executed automatically at machine scale.

Historically, exploiting such structural inconsistencies required extraordinary human effort:

- Mapping UI prompts to backend functions

- Reverse-engineering workflows

- Testing edge conditions

- Chaining individually legitimate operations

These attacks were rare because effort acted as a natural defense.

AI removes that defense.

Large language models and autonomous agents can now:

- analyze API documentation at scale,

- detect semantic inconsistencies,

- discover authorization edge cases,

- generate attack paths automatically.

What once required weeks of obsession now becomes milliseconds of routine computation.

The Authorization Gap did not suddenly appear.

AI simply made it visible — and exploitable at scale.

6. The Structural Solution — Intent Lock

The next evolution of security is not stronger authentication.

It is constraining execution to verified intent.

I describe this requirement as an Intent Lock.

An Intent Lock binds three elements into a single verifiable unit:

- The meaning presented to the user

- The user’s approval context

- The backend authorization request

Conceptually:

// Bind visible meaning to execution

intentPayload = {

action: "transfer",

amount: 25000000,

recipient: "0xABC"

}

secureContext = sign(hash(UI_context + intentPayload))

if verify(secureContext):

executeHighRiskAction()

Authorization becomes inseparable from meaning.

Even valid authentication cannot execute actions outside the approved intent scope.

Security shifts from identity verification to intent confinement.Conclusion

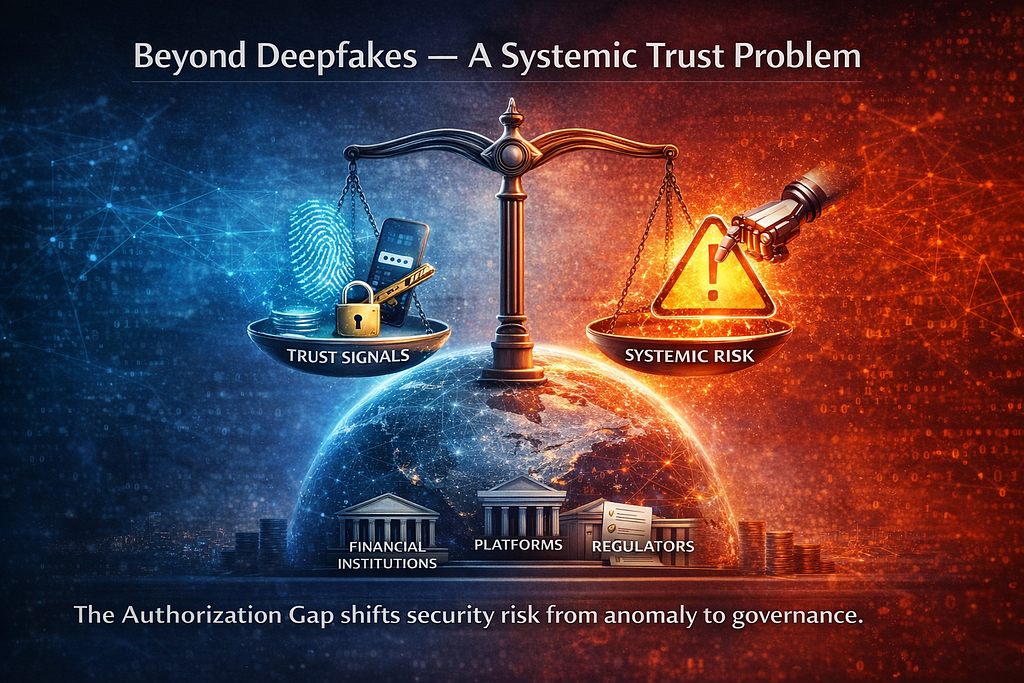

7. Beyond Deepfakes — A Systemic Trust Problem

As digital systems aggregate multiple trust signals into unified authorization decisions, security risk shifts from isolated technical exploits to systemic governance challenges.

The implications extend far beyond AI-generated video fraud.

Operating systems, financial platforms, and digital identity infrastructures increasingly aggregate multiple trust signals — biometrics, passcodes, device possession, behavioral analytics — into unified authorization decisions.

Yet these systems rarely guarantee that:

the action executed by machines matches the intention understood by humans.

As AI reduces the cost of discovering semantic mismatches, the Authorization Gap becomes a global systemic risk rather than an isolated vulnerability class.

For financial institutions, platform providers, and regulators, this shift represents not merely a security concern but a governance challenge.

8. A Continuing Architectural Investigation

The deepfake incident is not an anomaly.

It is an early signal of a broader transition in security thinking.

I explore this structural problem further through ongoing forensic analysis of mobile trust architectures, including a detailed examination of how modern operating systems balance usability, availability, and authorization assurance.

Further Reading — Architectural Case Study

A Proposal to Apple: Transforming the iPhone “6-Digit Passcode” into True Trust

A Proposal to Apple Part 1: Transforming the iPhone “6-Digit Passcode” into True Trust

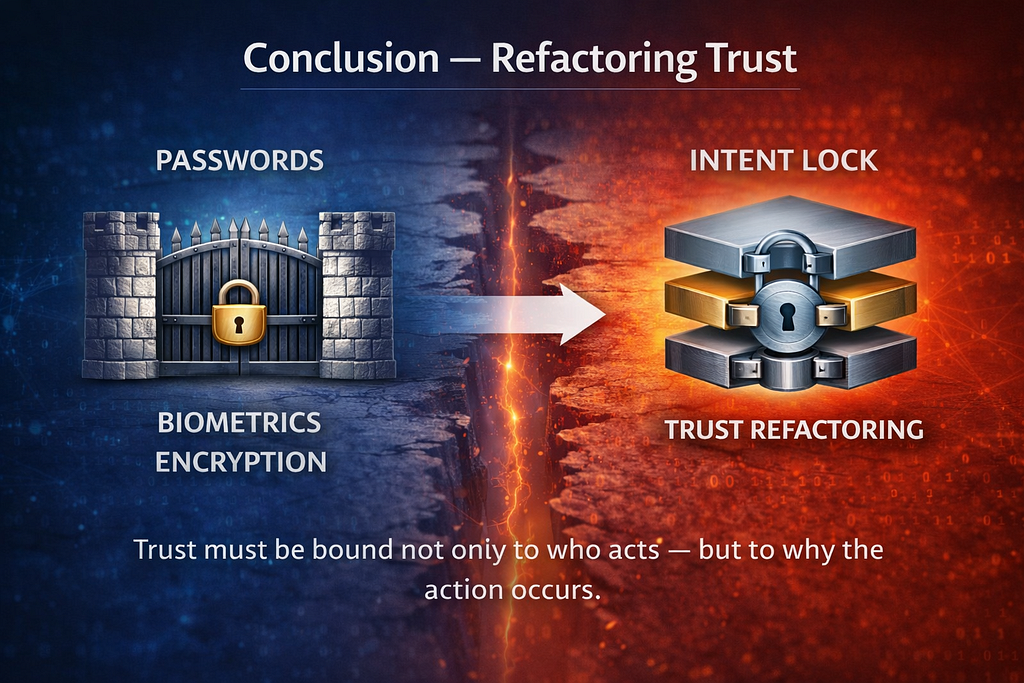

Conclusion — Refactoring Trust

For decades, security strengthened authentication gates. The next evolution shifts from verifying identity to constraining execution, binding authorization directly to human intent.

For decades, security evolved by strengthening the gate.

Better passwords.

Better biometrics.

Better encryption.

But the next generation of failures will not arise from broken authentication.

They will emerge from correctly authenticated actions executed with hijacked intent.

As AI transforms sophisticated attacks into routine processes, systems must evolve beyond verifying identity.

The future of digital security depends on one principle:

Trust must be bound not only to who acts — but to why the action occurs.

Until intent is structurally locked to authorization, every successful authentication may remain a potential point of failure.

About the author

Ryu360 is a system architect and digital forensics specialist

investigating structural failures in modern trust and authorization architectures.

His work examines how formally correct systems break under adversarial conditions,

with a focus on digital identity, authorization design, and hidden trust boundaries

within large-scale security frameworks.

Consultation / Research Collaboration:

👉 https://forms.gle/btGiwS9ZRc3XhZL37

The $25M Deepfake: When Successful Authentication Becomes the New Single Point of Failure was originally published in DataDrivenInvestor on Medium, where people are continuing the conversation by highlighting and responding to this story.