Stop Counting Stars: Proof-of-Spend and the Case for x402 as an Agent Trust Layer

SAL9000 · 8 min read · 1 hour ago

Every evaluation system humanity has built relies on the same hidden assumption: that judging something costs the judge something.

Over the past year, Claude Code’s ecosystem has exploded. Skills, hooks, subagents, MCP servers: hundreds of community-built assets, curated in GitHub “awesome” lists and browsable marketplaces. And every one of these marketplaces evaluates assets the same way. Stars. Downloads. Thumbs up. The same metrics we have been recycling since the App Store launched in 2008.

The asset with 2,000 stars might be abandonware that happened to go viral once. The one with 12 stars might be the only thing that actually works for your use case. We know this. We have known it for years. But it did not matter much when humans were the ones browsing, because humans could compensate: read the README, check the last commit date, skim the issues.

Agents cannot do this. An agent selecting a skill for a production workflow has no intuition, no social context, no ability to “sense” quality from tone or formatting. It needs a signal that is computationally verifiable and economically meaningful. And the signals we have been giving it (stars, downloads, ratings) are neither.

What PageRank understood, and what broke it

Google’s PageRank was one of the most elegant ideas in the history of information retrieval. The insight was deceptively simple: you do not need to read a web page to know if it is valuable. You just count who links to it.

This worked because a backlink was not free. A human had to find the page, spend finite time reading it, judge it worth referencing, and create the link. That time, which could have been spent on something else and was risked on content that might have been worthless, was the hidden cost embedded in every link. PageRank did not evaluate content. It evaluated the accumulated risk-taking of humans who had evaluated the content.

Academic impact factor operates on the same principle. A citation means a researcher spent scarce career time reading a paper, decided it was relevant enough to reference, and staked a small piece of their professional reputation on that judgment. The citation graph does not measure truth. It measures the pattern of costly decisions made by people with skin in the game.

The underlying structure is the same in both cases: the signal is credible because the evaluator paid an irrecoverable cost. Time spent reading a worthless page is time you do not get back. A citation on a paper that turns out to be wrong is a small stain on your record. The signal works because it is a byproduct of genuine risk-taking.

And both systems are now breaking.

In 2025, AI-generated text surpassed human-written output for the first time. Brett Winton’s chart from ARK’s Big Ideas 2026 tells the story visually:

A study of 900,000 web pages found that 74% of newly created pages contained AI-generated content. Europol estimates that up to 90% of online content may be synthetically generated by 2026. Meanwhile, AI crawlers traverse the web at near-zero marginal cost, consuming pages that were themselves generated at near-zero marginal cost.

The backlink graph is becoming noise. When a link can be generated by a bot that never “read” anything, and the page it points to was written by another bot, the hidden cost that made PageRank work, human time at risk, has evaporated.

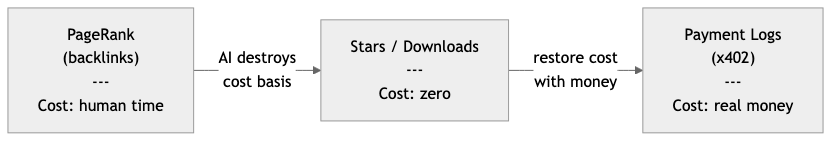

GitHub stars and download counts are already in this state. They cost nothing to produce, nothing to fake, and carry zero risk for the evaluator. They are PageRank after the bots arrived: a signal whose underlying cost basis has been destroyed.

The question, then, is what comes after PageRank. What meta-information can still reduce the entropy of evaluation when content is infinite and attention is no longer the bottleneck?

I think the answer is payment.

Not because money is inherently meaningful, but because payment is the last remaining signal where the evaluator bears genuine risk. An agent that pays $0.01 for a skill has made a decision that might be wasted. Multiply that by thousands of agents making thousands of such decisions, and you get a signal that is, like early PageRank, a byproduct of costly, irrecoverable commitments. You cannot game it without actually spending. You cannot inflate it without bearing real cost. And unlike stars or links, the cost basis cannot be driven to zero by automation, because the money is the cost.

In information-theoretic terms: in a world where content is abundant and evaluation signals are cheap, entropy rises. The only signal that can systematically reduce that entropy is one where producing the signal requires destroying something scarce. Payment destroys purchasing power. That destruction is what makes it informative.

Proof-of-Spend: payment as a trust primitive

PageRank derived trust from the cost of human time. Impact factor derived trust from the cost of academic attention. Both were indirect: the cost was a side effect of consumption, not a deliberate signal. Payment makes this structure explicit.

I am calling this Proof-of-Spend. When an agent pays for a skill, a cost is incurred that the agent cannot recover if the skill turns out to be useless. This is the same irrecoverable commitment that made backlinks and citations work. But unlike time or attention, money leaves a receipt. On a blockchain, that receipt is immutable, timestamped, and publicly verifiable. No one can retroactively inflate or deflate it. And the receipt encodes a decision: “Given my context, my budget, and my alternatives, I chose this.” Aggregate thousands of such decisions across thousands of agents, and a reputation emerges that no one designed and no one controls.
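As a rough illustration of what such a receipt might carry, here is a minimal Python sketch. The field names and the `receipt_id` helper are hypothetical, chosen for this example; a real on-chain receipt would be whatever the underlying chain records. The content hash only stands in for the property that a recorded receipt cannot be altered without detection.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class Receipt:
    payer: str        # hypothetical agent wallet address
    payee: str        # hypothetical creator wallet address
    skill_id: str     # which asset was paid for
    amount_usd: str   # kept as a string to avoid float rounding
    timestamp: int    # seconds since epoch, as recorded at settlement


def receipt_id(r: Receipt) -> str:
    """Content hash of the receipt: changing any field changes the id."""
    canonical = json.dumps(asdict(r), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


original = Receipt("0xAgentA", "0xCreator1", "skill/format-sql", "0.01", 1_700_000_000)
tampered = Receipt("0xAgentA", "0xCreator1", "skill/format-sql", "10.00", 1_700_000_000)

print(receipt_id(original) == receipt_id(original))  # True: the id is deterministic
print(receipt_id(original) == receipt_id(tampered))  # False: an inflated amount is detectable
```

The sketch treats the receipt as a plain record of who paid whom, for what, and when. Everything else in the reputation layer can be derived from records of that shape.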

This is not a new rating system bolted on top of existing marketplaces. It is a different primitive entirely, one where trust is a byproduct of economic activity rather than a separate layer.

Designing a non-custodial skill market

The critical design question is: who touches the money?

If the marketplace handles funds (escrow, splitting, distribution), it becomes a financial intermediary. Depending on jurisdiction, that means licenses, compliance obligations, and custodial risk. The marketplace becomes a chokepoint, and the permissionless nature of the system collapses.

The answer is to build the marketplace as a pure observer.

Here is how x402 makes this possible. The protocol is simple: a server returns HTTP 402 (Payment Required) with payment conditions. The client pays directly, wallet to wallet, and resubmits the request with a transaction receipt. The server verifies and grants access. No intermediary holds funds at any point.
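The challenge, pay, resubmit loop described above can be sketched as a small in-memory simulation. This is not the x402 wire format: the `Ledger`, `SkillServer`, and `fetch_skill` names, the `pay_to` and `amount` fields, and the use of a transfer id as the receipt are all assumptions made for illustration. Amounts are integers in micro-USD to avoid float rounding.

```python
import itertools
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Ledger:
    """Stand-in for a public chain: balances plus an append-only transfer log."""
    balances: dict
    transfers: dict = field(default_factory=dict)
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1))

    def transfer(self, sender: str, recipient: str, amount: int) -> str:
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient funds")
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount
        tx_id = f"tx-{next(self._ids)}"
        self.transfers[tx_id] = (sender, recipient, amount)
        return tx_id


class SkillServer:
    """Answers 402 with payment conditions until shown a valid, unused receipt."""

    def __init__(self, ledger: Ledger, pay_to: str, price: int):
        self.ledger, self.pay_to, self.price = ledger, pay_to, price
        self.redeemed = set()  # receipts already used, to reject replays

    def handle(self, receipt: Optional[str] = None):
        challenge = (402, {"pay_to": self.pay_to, "amount": self.price})
        if receipt is None or receipt in self.redeemed:
            return challenge
        tx = self.ledger.transfers.get(receipt)
        if tx is None or tx[1] != self.pay_to or tx[2] < self.price:
            return challenge
        self.redeemed.add(receipt)
        return 200, {"skill": "...skill payload..."}


def fetch_skill(server: SkillServer, ledger: Ledger, wallet: str):
    """Client side: request, pay the payee directly on 402, then resubmit."""
    status, body = server.handle()
    if status == 402:
        tx_id = ledger.transfer(wallet, body["pay_to"], body["amount"])
        status, body = server.handle(receipt=tx_id)
    return status, body


ledger = Ledger(balances={"agent-wallet": 50_000})
server = SkillServer(ledger, pay_to="creator-wallet", price=10_000)  # 10,000 micro-USD = $0.01
status, body = fetch_skill(server, ledger, "agent-wallet")
print(status, ledger.balances)
```

Note that the server only reads the ledger. The transfer goes from the agent's wallet to the creator's wallet, and nothing in between ever holds a balance.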

Applied to a skill marketplace:

The marketplace never holds keys, never custodies funds, never executes transactions. It does three things: discovery, challenge, and observation. This is not a financial service. It is a directory with a payment-gated door, where the door’s lock is the blockchain itself.
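The three roles named above (discovery, challenge, observation) might look like this in a minimal Python sketch. The `Listing` and `ObserverMarketplace` names are hypothetical, and matching a transfer to a listing by payee and amount is a deliberate simplification; a real design would need a more robust way to tie a payment to a specific skill.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    skill_id: str
    description: str
    pay_to: str   # the creator's own wallet; the marketplace is never the payee
    price: int    # micro-USD


class ObserverMarketplace:
    """Holds listings only: no keys, no balances, no transfer method."""

    def __init__(self, listings):
        self.listings = {l.skill_id: l for l in listings}

    def discover(self, keyword: str):
        """Discovery: find listed skills whose description mentions the keyword."""
        return [l.skill_id for l in self.listings.values() if keyword in l.description]

    def challenge(self, skill_id: str):
        """Challenge: state the payment conditions, pointing at the creator's wallet."""
        l = self.listings[skill_id]
        return {"status": 402, "pay_to": l.pay_to, "amount": l.price}

    def observe(self, public_transfers):
        """Observation: read a public transfer log and count payments per listing."""
        by_terms = {(l.pay_to, l.price): l.skill_id for l in self.listings.values()}
        counts = Counter()
        for sender, recipient, amount in public_transfers:
            skill_id = by_terms.get((recipient, amount))
            if skill_id is not None:
                counts[skill_id] += 1
        return counts


market = ObserverMarketplace([
    Listing("sql-formatter", "format SQL queries", "creator-1", 10_000),
    Listing("log-parser", "parse server logs", "creator-2", 1_000),
])
chain_log = [
    ("agent-a", "creator-1", 10_000),
    ("agent-b", "creator-1", 10_000),
    ("agent-a", "unrelated-wallet", 5),
]
print(market.discover("SQL"))
print(market.challenge("sql-formatter"))
print(market.observe(chain_log))
```

The class has no method that moves money, which is the point of the design: the custody question is answered by what the marketplace cannot do.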

What reputation looks like in this model

In a star-based system, reputation is a popularity contest. In a payment-based system, reputation is a decision log.

Consider what becomes observable:

- Frequency: How often is this skill paid for? Is demand sustained or a one-time spike?

- Recurrence: Do the same agents come back? Retention is the hardest signal to fake.

- Diversity: Is this skill used by one agent or thousands? Breadth indicates generality.

- Context: What other skills does the paying agent use? Co-purchase patterns reveal quality signals that no rating system can capture.

- Amount: Are agents paying the asking price, or does the creator keep lowering it? Price dynamics encode market sentiment.

None of these signals require trust in a central authority. They are all derived from on-chain payment data that anyone can independently verify.
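To make the five signals concrete, here is a minimal Python sketch that derives them from a flat list of payment records. The `Payment` fields and the specific definitions (for example, recurrence as the share of payers who paid more than once) are assumptions for illustration, not a proposed standard.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Payment:
    payer: str
    skill_id: str
    amount: int   # micro-USD
    day: int      # day index of settlement


def signals(payments, skill_id):
    """Derive the five signals for one skill from a public payment log."""
    mine = sorted((p for p in payments if p.skill_id == skill_id), key=lambda p: p.day)
    if not mine:
        return None  # no payment history: outside the reputation layer
    per_payer = Counter(p.payer for p in mine)
    payers = set(per_payer)
    co = Counter(
        p.skill_id for p in payments
        if p.payer in payers and p.skill_id != skill_id
    )
    return {
        "frequency": len(mine),                         # how often it is paid for
        "active_days": len({p.day for p in mine}),      # sustained demand or a spike
        "recurrence": sum(1 for n in per_payer.values() if n > 1) / len(per_payer),
        "diversity": len(payers),                       # distinct paying agents
        "co_purchased": co.most_common(3),              # context from the same payers
        "price_change": mine[-1].amount - mine[0].amount,  # latest minus earliest price
    }


payments = [
    Payment("a", "fmt", 1_000, 1),
    Payment("b", "fmt", 1_000, 1),
    Payment("a", "fmt", 1_000, 2),
    Payment("c", "fmt", 800, 5),
    Payment("a", "lint", 500, 2),
    Payment("c", "lint", 500, 5),
    Payment("d", "other", 100, 3),
]
print(signals(payments, "fmt"))
```

Every value is computed from the log alone, so two parties reading the same log would arrive at the same numbers.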

There is an important subtlety here. This is not “pay-to-rank.” A skill that charges $10 does not automatically outrank one that charges $0.01. The signal is in the pattern of decisions, not the magnitude of any single payment. A $0.001 skill that gets paid 50,000 times by 3,000 distinct agents is demonstrating something that no amount of stars can express.
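The difference between ranking by magnitude and ranking by pattern can be shown with a toy comparison. The `pattern_score` formula below is hypothetical and chosen only because price is not one of its inputs; the $10 skill's figures are made up as a comparison point, while the $0.001 skill uses the numbers from the paragraph above.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillStats:
    name: str
    price_micro_usd: int
    payment_count: int
    distinct_payers: int

    @property
    def revenue_micro_usd(self) -> int:
        return self.price_micro_usd * self.payment_count


def pattern_score(s: SkillStats) -> float:
    """Hypothetical score: breadth of payers times repeat depth. Price is not an input."""
    if s.distinct_payers == 0:
        return 0.0
    breadth = math.log1p(s.distinct_payers)
    depth = math.log1p(s.payment_count / s.distinct_payers)
    return breadth * depth


cheap = SkillStats("cheap", 1_000, 50_000, 3_000)   # $0.001, paid 50,000 times by 3,000 agents
pricey = SkillStats("pricey", 10_000_000, 10, 2)    # $10, paid 10 times by 2 agents (made up)

by_revenue = max([cheap, pricey], key=lambda s: s.revenue_micro_usd).name
by_pattern = max([cheap, pricey], key=pattern_score).name
print(by_revenue, by_pattern)  # pricey cheap
```

Ranked by revenue, the $10 skill wins with $100 against $50. Ranked by the pattern of decisions, the cheap skill wins by a wide margin.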

Why this matters beyond skills

Nothing about Proof-of-Spend is specific to AI coding tools. API endpoints, data feeds, model weights, verification services, oracle queries: anywhere an agent makes a “should I use this?” decision, the same logic applies. If the agent paid, and the payment is verifiable, you have a trust signal that no amount of stars can match.

This is the inverse of advertising. Advertising pays to capture attention. Proof-of-Spend pays to access value. In an agent economy where attention does not exist as a resource (agents have compute budgets, not eyeballs), advertising has no surface to attach to. What remains is direct exchange: value delivered, value paid, value recorded.

To be clear: I am not proposing that every skill must cost money. Free and open-source assets are essential and should remain free. The argument is narrower: when evaluation signals are needed, payment-based signals are strictly more informative than cost-free signals. A free skill with zero payment history is not penalized; it simply exists outside the reputation layer. And none of this replaces human judgment. Humans will keep curating and recommending. But the ratio of agent-to-agent transactions to human-to-agent transactions is growing, and machines need signals that machines can verify.

What this does not solve

If payment becomes the dominant trust signal, it creates a new question: what about good assets that never accumulate payment history? A creator without initial capital to subsidize early adoption might never reach the threshold of visibility. Proof-of-Spend solves the honesty problem of evaluation, but it does not automatically solve the access problem. Progressive pricing, trial periods, and reputation bootstrapping are real design challenges, not hand-waving.

But they are engineering problems. The deeper issue, that cost-free signals lose all information content in a world of infinite content and zero-cost evaluation, is structural. No amount of better star-counting fixes it.

The infrastructure for agent payments exists today. x402 is live. Agent wallets are live. The skill economy is growing. What is missing is not technology but a shared recognition that the transactions agents are already making could be the most honest reputation system we have ever had.

I am not building this. But I believe someone will, and when they do, stars will feel as quaint as PageRank in the age of bots.

I have published a protocol sketch on GitHub. Fork it. Break it. Build something better.

I am a contributor to the x402 protocol, maintained by the x402 Foundation under the Linux Foundation. Views expressed here are my own.