
Art of Autoscaling in Kubernetes

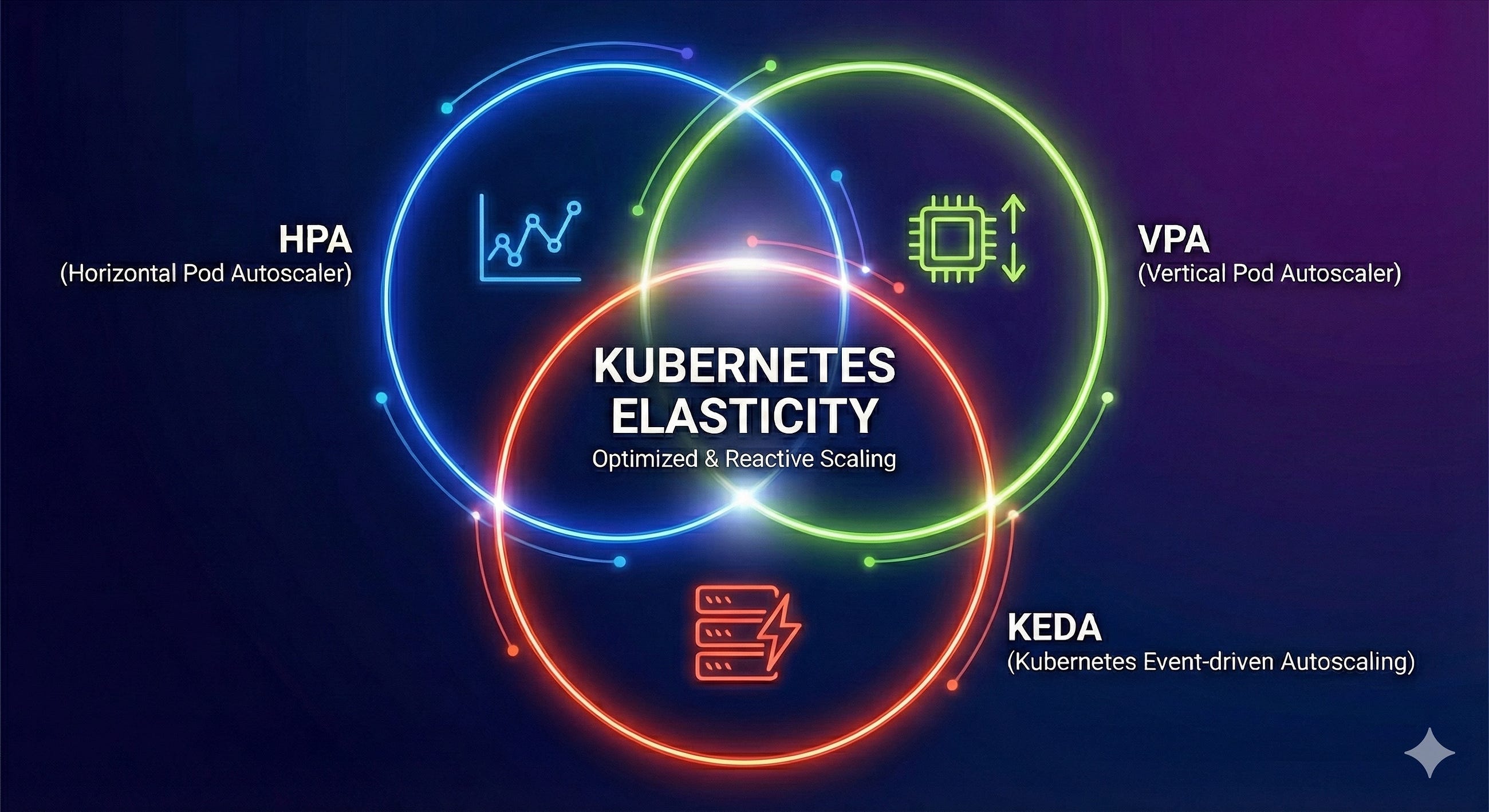

Master the three pillars of elasticity to build a cluster that scales with intelligence, not just brute force

Mohit Malhotra · 5 min read


Most engineering teams start their Kubernetes journey with a simple goal: “Make it scale.” They enable the Horizontal Pod Autoscaler (HPA), set a CPU target, and assume the job is done. HPA is the indispensable foundation of cloud-native elasticity, but using it effectively requires understanding its limits.
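For reference, the "enable HPA and set a CPU target" step usually amounts to a single `autoscaling/v2` HorizontalPodAutoscaler object. The sketch below builds one as a Python dict and prints it as JSON. The Deployment name `web`, the 2 to 10 replica bounds, the 70% target, and the helper name `cpu_hpa_manifest` are hypothetical values chosen for illustration.

```python
import json


def cpu_hpa_manifest(deployment: str, min_replicas: int, max_replicas: int,
                     cpu_percent: int) -> dict:
    """Build an autoscaling/v2 HPA that targets average CPU utilization.

    Hypothetical helper for illustration; it only constructs the object.
    """
    if not 1 <= min_replicas <= max_replicas:
        raise ValueError("require 1 <= min_replicas <= max_replicas")
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": f"{deployment}-hpa"},
        "spec": {
            # The workload whose replica count this HPA controls.
            "scaleTargetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            "metrics": [
                {
                    "type": "Resource",
                    "resource": {
                        "name": "cpu",
                        # Utilization is a percentage of the pods' CPU *requests*.
                        "target": {
                            "type": "Utilization",
                            "averageUtilization": cpu_percent,
                        },
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical values: Deployment "web", 2-10 replicas, 70% average CPU.
    print(json.dumps(cpu_hpa_manifest("web", 2, 10, 70), indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the output can be piped straight into `kubectl apply -f -`. Note that a `Utilization` target is measured against each pod's CPU request, so pods without CPU requests give the HPA nothing to compare against. That is one practical limit of a CPU-only setup.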

A truly resilient production environment doesn’t just “add more pods.” It right-sizes them dynamically and reacts to events before they become outages. To achieve this, you need to coordinate three distinct Kubernetes scaling mechanisms: HPA (The Scaler), the Vertical Pod Autoscaler, or VPA (The Right-Sizer), and Kubernetes Event-driven Autoscaling, or KEDA (The Event-Driven Trigger).

Horizontal Pod Autoscaler (HPA)

HPA is the bread and butter of Kubernetes scaling. It is the default mechanism for handling variable traffic patterns in stateless applications. Think of it as a highway manager that opens more lanes when traffic slows down.

How it works

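The HPA controller inside the Kubernetes Controller Manager (kube-controller-manager) runs a control loop, every 15 seconds by default. On each pass it queries the Metrics Server, through the resource metrics API (`metrics.k8s.io`), for resource usage (like CPU or memory)…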
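The Kubernetes HPA documentation describes the core replica calculation as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no change made while the ratio stays within a tolerance (0.1 by default). The Python sketch below implements only that single-metric rule plus clamping to the min/max bounds. It deliberately ignores pod readiness, missing metrics, and scale-down stabilization windows, and the `simulate` helper assumes load spreads evenly across pods. Both function names are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int,
                     max_replicas: int, tolerance: float = 0.1) -> int:
    """Simplified single-metric HPA replica calculation (illustrative only)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(1.0 - ratio) <= tolerance:
        desired = current_replicas  # close enough to target: leave it alone
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the bounds configured on the HPA object.
    return max(min_replicas, min(max_replicas, desired))


def simulate(total_load: float, start_replicas: int, target: float,
             min_replicas: int, max_replicas: int, syncs: int) -> list[int]:
    """Replay several sync periods under a constant total load.

    Assumption: per-pod utilization = total_load / replicas (even spread).
    """
    history = [start_replicas]
    replicas = start_replicas
    for _ in range(syncs):
        per_pod = total_load / replicas
        replicas = desired_replicas(replicas, per_pod, target,
                                    min_replicas, max_replicas)
        history.append(replicas)
    return history


if __name__ == "__main__":
    print(desired_replicas(3, 120, 60, 1, 10))  # 6: utilization is double the target
    print(desired_replicas(4, 63, 60, 1, 10))   # 4: within the 10% tolerance band
    print(simulate(360, 2, 60, 1, 10, 3))       # [2, 6, 6, 6]: converges in one sync
```

The simulation shows why the proportional rule settles quickly when load is steady: once per-pod utilization matches the target, the ratio is 1.0 and the replica count stops changing.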